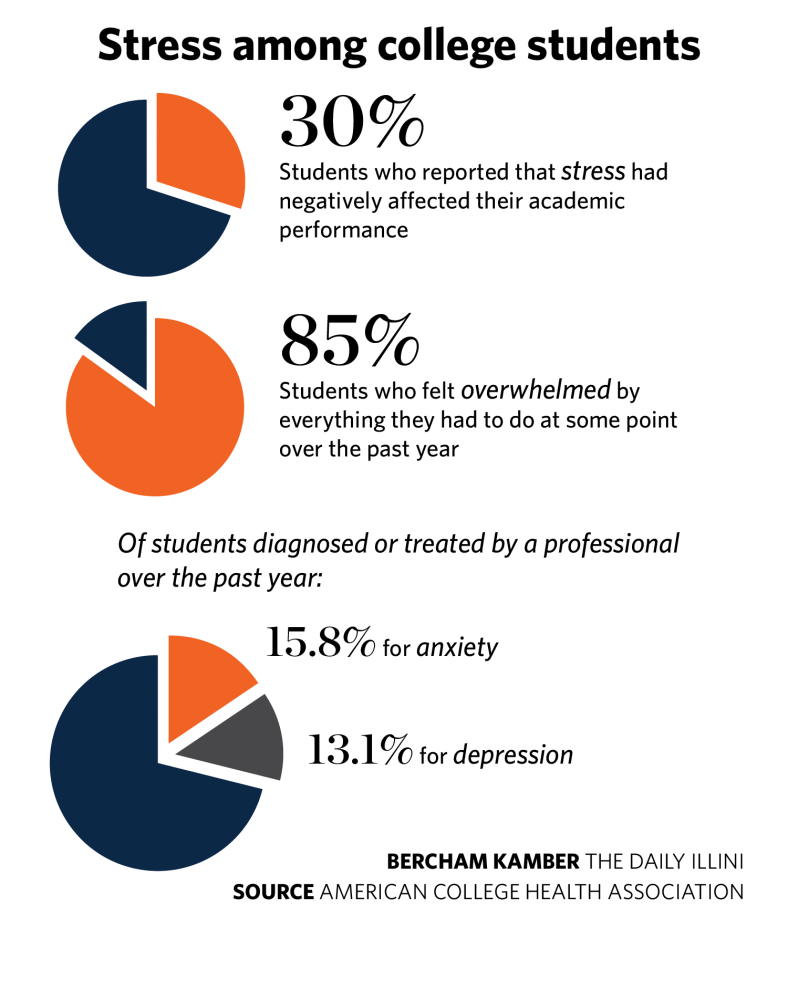

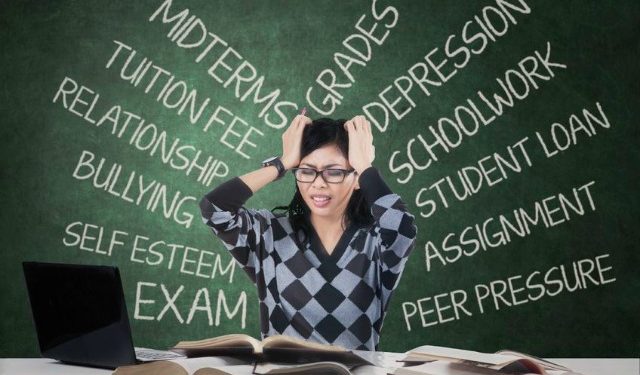

In the United States, almost 10% of college students have been diagnosed with or treated for depression, and that number is increasing. Mental health issues such as stress, anxiety, and depression are among the health topics teenagers most often search for online, and online content often motivates young people to change their health behaviors. It is important to examine this growing problem among college students and to offer a solution.

The rise in anxiety and depression among college students is outpacing the services available and overwhelming student health services at universities. Furthermore, some students do not have access to traditional mental health services and are unable or unwilling to seek treatment because of social stigma. Academics and going off to college are primarily perceived as positive challenges; however, if a student views them negatively, the resulting stress can be detrimental to the student’s health and well-being (Beiter). For example, researchers at Franciscan University of Steubenville, Ohio, conducted a study of their students’ mental health and found that many students were experiencing extreme depression, anxiety, and stress. This illustrates both the pattern and the need for universities to implement a systematic, continuous method of monitoring the mental health of their students. The researchers suggest that this type of monitoring, along with increased availability of programs, would allow universities to evaluate the mental health needs of their students and to improve their existing counseling programs (Beiter). It is important to be able to identify exactly which issues are most prominent. These may include, for example, a negative body image, which can contribute to low life satisfaction, and low self-esteem, which may result in poor social, emotional, and learning function. To combat these and other issues among college-age students and to have a greater impact, we need a broad-reaching solution.

There is some evidence that the first generation of digital mental health interventions can be effective for conditions such as anxiety and depression. These interventions, powered by artificial intelligence (AI), may work because they provide an engaging tool that mimics human dialogue and is designed to make users feel as though they are speaking to a real person (Kretzschmar). Chatbots process the text and emojis a user enters and respond with guided conversations and advice to help users cope with mental health challenges. They also offer daily check-ins on users’ emotions, thoughts, and behaviors (Kretzschmar). While they are no substitute for in-person therapy, these tools may help reverse the negative thinking processes that drive depression and anxiety. College students could benefit from mental health chatbots and platforms because they are easy to access. Because today’s young people and college students are media- and tech-savvy, they could easily use these services (Kretzschmar). In short, chatbots could help curb the rising rates of depression and anxiety among college students and young people.

Digital interventions are accessible to anyone with a smartphone and an internet connection, and they can reach young people in regions that lack mental health professionals and where mental illness often goes untreated. Mental health apps are readily accessible and easy to use whenever users feel sad, anxious, or stressed or simply need a distraction (Kretzschmar). They are also significantly cheaper than face-to-face interventions such as cognitive behavioral therapy. Young people are often hesitant to seek out therapy because of social and self-stigmatizing attitudes toward mental health interventions. There is also evidence that younger teens tend to feel more in control when managing difficult situations through online text conversations than through in-person interactions (Kretzschmar). Another common barrier is trust and confidentiality. Many young people feel that their problems are too personal to discuss with an adult or with anyone who might divulge sensitive information to others. These individuals may find that a digital intervention or app used anonymously is a better outlet to “discuss” their issues. It is also possible that, as they use chatbots and other resources, they will learn about alternative approaches, develop the skills to recognize when they need additional support, and become more open to speaking with a human being. In addition, if a student first speaks with a mental health professional face to face, mental health apps can still be recommended as a supplement or a form of intermediate support. Besides being available 24/7, bots reduce the fear of being judged. A bot responds to the user’s emotions and draws on evidence-based CBT (cognitive behavioral therapy) techniques, meditation, breathing exercises, yoga, motivational interviewing, and micro-actions to help build mental resilience skills (Dar). Users can also benefit from gaming techniques; for example, they can move through carefully designed virtual environments and perform tasks that get progressively harder. The performance results can help researchers assess users’ motivation levels and design treatments that keep them committed. “If you look at the societal need, as well as the ability of AI to help, I think that digital mental healthcare checks all the boxes,” says computer scientist and AI professor Andrew Ng (Knight).

Chatbots can provide companionship and therapy support. “Younger people are the worst served by our current systems,” says Alison Darcy, a clinical research psychologist who came up with the idea for Woebot, a therapy chatbot whose icon is a robot. Woebot works smoothly thanks to a clever interface and some impressive natural-language technology (Knight). It can be used anonymously if you choose; when I tried it out, I did not enter any personal information. It states up front that no person will see your answers and offers ways of reaching someone if your situation becomes serious. If it does not understand something a person has typed, it apologizes and explains that it is only a few months old and is still learning. It then guides you through conversations that assess how you are feeling based on your answers. It checks in with you every day at a time of your choosing and walks you through each step. If you say that you are stressed about work, it offers ways of reframing your feelings to make them more positive. Darcy says that Woebot is effective because conversation is a natural way to communicate distress and receive emotional support. If, after prolonged use, users show no signs of improvement, consistently rate their energy levels as low, or use keywords such as “sad,” “anxious,” or “depressed” to describe their mood, Woebot nudges them toward seeking medical help (Lien). “The idea of therapy is so burdensome and loaded for some people, and we’re not that — we’re not as intensive,” Darcy said. “We have this hope that people will use us and not even realize we’re a mental health tool.”

As stated, there is no substitute for an in-person mental health professional. While chatbots and other mental health platforms provide daily check-ins and can assess mood, they lack EQ (emotional quotient) (Dar). They can interpret answers in depth but cannot sense discomfort, read body language, or pick up on other forms of communication that only a human can observe. Bots also cannot replace treatment for people with serious conditions. Some people would not want to share personal data with a bot, and many doubt that a machine can help manage emotional issues as effectively as a professional. There can also be technical problems: a bot may seem repetitive, or the flow of conversation may break down. Some bots are not effective at detecting and responding to child sexual abuse, drug use, and eating disorders (Dar). There are also ethical issues involving data collection and the potential for poor advice. Chatbots and other AI tools are certainly no substitute for real-life therapy, but can they be a helpful solution for a certain population? Can they help guide college students toward healthier thought processes?

College students and young people can benefit from chatbots and other forms of AI that help them establish healthier ways of thinking and manage the symptoms of depression and anxiety. While it is important to stress that chatbots and other mental health platforms are no substitute for in-person therapy, they can provide a service that helps mitigate the patterns of thinking that contribute to these conditions. I think that colleges and universities can use these tools to check in with their students and possibly to track and study the prevalence of depression and anxiety among college-age students. I also believe these tools can reach individuals who have no access to mental healthcare or who live in rural areas. As Andrew Ng says, “If you look at the societal need, as well as the ability of AI to help, I think that digital mental healthcare checks all the boxes. If we can take a little bit of the insight and empathy of a real therapist and deliver that, at scale, in a chatbot, we could help millions of people” (Knight).

In the United States, there is a growing problem of depression and anxiety among college students and young people. I believe that chatbots and other mental health platforms can help reduce this problem while also giving us a way to study its effects and develop better methods of reaching our youth. The accessibility and anonymity of chatbots make them an effective way to reach this population. While they are no substitute for in-person treatment, they are worth trying as a way to help young people struggling with symptoms of depression and anxiety.

References:

Beiter, R., Nash, R., McCrady, M., Rhoades, D., Linscomb, M., Clarahan, M., & Sammut, S. (2015). The prevalence and correlates of depression, anxiety, and stress in a sample of college students. Journal of Affective Disorders, 173, 90–96. https://doi.org/10.1016/j.jad.2014.10.054

Dar, V. (2019, December 15). Chat with a bot: Technology can be good frontline for mental therapy. Financial Express. Retrieved from http://ezaccess.libraries.psu.edu/login?url=https://www-proquest-com.ezaccess.libraries.psu.edu/docview/2326348871?accountid=13158

Knight, W. (2018, January). Andrew Ng has a chatbot that can help with depression. MIT Technology Review, 121, 15. Retrieved from http://ezaccess.libraries.psu.edu/login?url=https://www-proquest-com.ezaccess.libraries.psu.edu/docview/1990740397?accountid=13158

Kretzschmar, K., et al. (2019, January). Can your phone be your therapist? Young people’s ethical perspectives on the use of fully automated conversational agents (chatbots) in mental health support. Biomedical Informatics Insights. https://doi.org/10.1177/1178222619829083

Lien, T. (2017, August 24). A bot that listens; Woebot aims to give a lift to people who are depressed or stressed. Los Angeles Times. Retrieved from http://ezaccess.libraries.psu.edu/login?url=https://www-proquest-com.ezaccess.libraries.psu.edu/docview/1931501239?accountid=13158